Optimizing RCA and FMEA in Health Care

“Failure Mode and Effect Analysis” (FMEA) and “Root Cause Analysis” (RCA) are becoming commonplace terms in work environments and in the literature. This article will demonstrate that these terms, while seemingly generic references to regulatory compliance, actually elicit various interpretations from individuals. As a result, FMEAs and RCAs are applied in equally disjointed ways, and their results are just as inconsistent. A case history will demonstrate how new technologies can expedite comprehensive analyses.

Finally, this article will look into the purpose and intent of using such technologies in health care. Could the desire for regulatory compliance overshadow the primary objective of increasing patient safety? Are such analysis tools value-added? Can they really contribute to improving the bottom line?

While relatively new to the health care world, FMEA and RCA are well established in other industries. Many in health care might guess that these terms come from general industry, as they sound like something an engineer would create. Almost automatically, a stereotype of these terms emerges, and it may include paradigms such as:

- They are too cumbersome for health care;

- They are passing fads, trendy acronym-like terms;

- Only a few in the organization understand them;

- They signify yet another compliance burden placed on risk managers by the Joint Commission on Accreditation of Healthcare Organizations (JCAHO).

Whether or not these paradigms are accurate, they become reality for the risk managers who hold them. Those risk managers will dismiss these tools on the basis of flawed perceptions; errors will occur, and undesirable consequences will result.

The difference between FMEA and RCA

Many risk managers first heard about FMEA and RCA when JCAHO released its Leadership Standards and Elements of Performance Guidelines in July 2002.(1) Also in July 2002, the American Society for Healthcare Risk Management (ASHRM) published a whitepaper/monograph titled “Strategies and Tips for Maximizing Failure Mode & Effect Analysis in Your Organization.”(2)

So what is the difference between FMEA and RCA? The overall intent of these terms is as follows:

- FMEA is a team-based, systematic and proactive approach for identifying the ways that a process or design can fail, why it might fail, and how it can be made safer. The purpose of performing an FMEA for JCAHO was to identify where and when possible system failures could occur and to prevent those problems before they happen. If a particular failure could not be prevented, then the goal would be to prevent the issue from affecting health care organizations in the accreditation process.(3)

- RCA is a process, usually reactive, for identifying the basic or causal factors that underlie variation in performance and which can produce unexpected and undesired adverse outcomes. Usually, a combination of root causes sets the stage for failure.(4)

These are admirable ideas and are moves in the right direction to change the way people see their work processes. However, there is no standard analysis methodology. Which one is appropriate for health care?

The introduction of technology to conduct analyses

Discussions of analyses may include tools like “5 whys” and “the fishbone technique,” which have become popular through the introduction of Total Quality (TQ) efforts.

The fishbone technique is an analysis tool that provides a systematic way of looking at effects and the causes that create or contribute to those effects. Because of this function, the fishbone diagram is also referred to as a cause-and-effect diagram; its layout resembles the skeleton of a fish. The value of the fishbone diagram is that it helps teams categorize the many potential causes of problems or issues in an orderly way and identify root causes.(5)
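Structurally, a fishbone diagram is one effect plus a set of categories, each holding candidate causes. The Python sketch below models only that structure; the class name `Fishbone`, the category names and the example causes are hypothetical and are not drawn from the source or from any particular tool.

```python
from collections import defaultdict


class Fishbone:
    """Minimal cause-and-effect (fishbone) diagram: one effect, causes grouped by category."""

    def __init__(self, effect: str) -> None:
        self.effect = effect
        # category name -> list of candidate causes (the "bones" of the fish)
        self.bones: dict[str, list[str]] = defaultdict(list)

    def add_cause(self, category: str, cause: str) -> None:
        """Record a candidate cause under a category, ignoring exact duplicates."""
        if cause not in self.bones[category]:
            self.bones[category].append(cause)

    def render(self) -> str:
        """Return a plain-text outline: the effect, then each category and its causes."""
        lines = [f"Effect: {self.effect}"]
        for category in sorted(self.bones):
            lines.append(f"  {category}:")
            lines.extend(f"    - {cause}" for cause in self.bones[category])
        return "\n".join(lines)


# Hypothetical example: the categories and causes are illustrative only.
diagram = Fishbone("Delayed medication administration")
diagram.add_cause("Staffing", "Night shift understaffed")
diagram.add_cause("Communication", "Order not flagged as urgent")
diagram.add_cause("Equipment", "Dispensing cabinet offline")
diagram.add_cause("Staffing", "Night shift understaffed")  # duplicate, ignored
print(diagram.render())
```

Note that the diagram only organizes candidate causes; nothing in the structure tests them against evidence.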

The 5 whys technique suggests that, by repeatedly asking “Why?” (five times is a good rule of thumb), you can peel away the layers of symptoms that lead to the root cause of a problem. Very often the ostensible reason for a problem will lead to another question. Although this technique is called 5 whys, you may find that you need to ask the question fewer or more than five times before the underlying issue is uncovered.(6)
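The 5 whys procedure can be sketched as a loop that follows one answer per question. In this minimal Python sketch, the `why` mapping from each statement to its answer and the example statements are hypothetical; `max_asks` defaults to five only as the rule of thumb mentioned above.

```python
def five_whys(problem: str, why: dict[str, str], max_asks: int = 5) -> list[str]:
    """Repeatedly ask 'Why?' starting from the problem statement.

    `why` maps each statement to the single answer given for it (a hypothetical
    input format). The chain stops when no deeper answer is recorded or after
    `max_asks` questions, whichever comes first.
    """
    chain = [problem]
    for _ in range(max_asks):
        answer = why.get(chain[-1])
        if answer is None:  # no further answer recorded; stop early
            break
        chain.append(answer)
    return chain


# Hypothetical answers gathered for one problem statement.
answers = {
    "Patient received late dose": "Nurse did not see the order",
    "Nurse did not see the order": "Order was not flagged in the handoff",
    "Order was not flagged in the handoff": "Handoff template has no field for pending doses",
}
for depth, statement in enumerate(five_whys("Patient received late dose", answers)):
    print("  " * depth + statement)
```

Because each statement maps to exactly one answer, the chain can only end at one cause, which is the single-root-cause premise the article criticizes below.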

What is attractive about these analysis systems as opposed to other more disciplined approaches?

Typically:

- They do not require evidence to support their hypotheses;

- They can be done by individuals or teams;

- They are usually quick and inexpensive in providing an answer; and

- They comply with guidelines.

However, mere compliance with regulations, guidelines and standards does not necessarily make patients safer.

Using a tool like the 5 whys for something as serious as a reviewable sentinel event may leave serious contributing causes overlooked. While the concept of the 5 whys approach is easy to understand, its premise suggests that “a single root cause” exists for all events, which is simply not true.

Using tools that appear to be the least expensive, easiest and compliant with regulations may not be in the best interest of patient safety. The most appropriate tools for conducting RCA for sentinel events are those that are evidence-based and team-oriented and look at all possibilities (as opposed to just examining the most likely).

What are the objectives of analyses?

When considering which analysis approach is best for an organization, risk managers must first define the objective of the effort and what success will look like. Most would argue that the sole purpose of an RCA is to eliminate the risk of recurrence of a like event for the same reasons. This is both admirable and desirable. However, there can be loftier goals that pertain to learning.

Successful RCAs should be used to educate peers about exactly what caused the adverse outcome. When people learn about the errors that linked up to cause such events, they will become more aware of their decisions and possible consequences. This is the single most important benefit of reactive RCA as well as proactive FMEA: health care workers can learn how to make better decisions and, in the process, raise their knowledge and skill levels in the performance of their responsibilities.

Traditional manual analysis approaches can take three to six months. About 50 percent of that effort is administrative work: collecting the information, transcribing sticky notes and easel-pad works of art, organizing the information into reports and preparing PowerPoint presentations for management.

Technology is available today that uses software programs to expedite these tasks, cutting the cycle time of an analysis in half or more and freeing up risk managers' time for other tasks. Once an analysis is recorded in a password-protected database, its results can be communicated electronically to other authorized analysts.
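The "half or more" estimate can be checked with simple arithmetic. The sketch below assumes, for illustration, that software shortens only the administrative share of an analysis (about 50 percent, per the figure above) and leaves the analytical work unchanged; the function and parameter names are hypothetical.

```python
def estimated_cycle_months(manual_months: float, admin_share: float, admin_reduction: float) -> float:
    """Estimate analysis cycle time after software removes part of the administrative work.

    manual_months   -- duration of a fully manual analysis
    admin_share     -- fraction of that time spent on administrative tasks (0..1)
    admin_reduction -- fraction of the administrative time removed by software (0..1)
    Assumption: the analytical (non-administrative) work takes the same time either way.
    """
    if not (0 <= admin_share <= 1 and 0 <= admin_reduction <= 1):
        raise ValueError("shares must be between 0 and 1")
    analytical = manual_months * (1 - admin_share)
    administrative = manual_months * admin_share * (1 - admin_reduction)
    return analytical + administrative


for months in (3, 6):
    print(months, "->", estimated_cycle_months(months, admin_share=0.5, admin_reduction=1.0))
```

With all administrative time automated, a three- to six-month analysis drops to 1.5 to 3 months, exactly half; under this assumption, savings beyond half would have to come from the analytical work itself.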

Computer software can allow the creation of a “knowledge base” that users can easily search on various parameters and see if others have conducted similar analyses. This way, risk managers may be able to learn from others’ experience. (Controls can allow lead analysts to publish analyses after they have been approved by Legal.) This is the benefit of successfully sharing such information, providing it is used in accordance with state peer review protections.
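A searchable knowledge base with a publishing gate might look like the sketch below. The record fields, the `legal_approved` flag and the `search` function are illustrative assumptions, not features of any specific product; password protection and state peer review protections are outside the scope of the sketch.

```python
from dataclasses import dataclass


@dataclass
class Analysis:
    """One recorded RCA or FMEA in a hypothetical shared knowledge base."""
    title: str
    kind: str           # "RCA" or "FMEA"
    event_type: str     # e.g. "adverse drug event"
    department: str
    legal_approved: bool = False  # only approved analyses are visible to searchers


def search(analyses: list[Analysis], **criteria: str) -> list[Analysis]:
    """Return published analyses whose fields equal every given criterion.

    Drafts not yet approved by Legal are skipped, mirroring the publishing
    control described in the article. Unknown field names raise AttributeError.
    """
    return [
        a for a in analyses
        if a.legal_approved
        and all(getattr(a, name) == value for name, value in criteria.items())
    ]


# Hypothetical records for illustration only.
knowledge_base = [
    Analysis("Dose duplication after inter-facility transfer", "RCA", "adverse drug event", "Pharmacy", True),
    Analysis("Fall risk on rehabilitation unit", "FMEA", "patient fall", "Rehabilitation", True),
    Analysis("Lab result miscommunication (draft)", "RCA", "adverse drug event", "Laboratory"),
]

for match in search(knowledge_base, event_type="adverse drug event"):
    print(match.kind, "-", match.title)
```

The unapproved draft never appears in results, even when its fields match the query.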

Without standardization in methodology, it’s difficult to compare conclusions of analyses. Reports using different methods are inconsistent and can be confusing to management. Another benefit of software solutions is that they can offset this drawback and provide comprehensiveness and consistency to such efforts.

Where does failure originate?

The cost of a good software suite can range from $3,000 to $6,000, depending on the number of users and whether desktop or network applications are used. From a financial perspective, the question is whether FMEA and RCA are value-added activities. If it can be demonstrated that such analyses increase patient safety while improving the bottom line, it will become clear that such activities can help reduce reactive work and free more time for proactive risk management.

Many try to place values on the cost of poor judgment or human error. This is a difficult, if not impossible, task. Why? Human errors trigger physical consequences, which ultimately lead to adverse outcomes of some type and magnitude. It is these adverse outcomes that usually carry the price tags that everyone remembers, not the individual error that was a contributor.

Many do not understand the mechanics of failure, and that is why their analysis efforts fail. The concepts of FMEA and RCA are not limited to certain industries or certain types of events. Rather, they are about the human thought process. The nature of the adverse outcome should be irrelevant; the common denominator is that a human being is trying to solve the problem.

The same thought process used to determine why an Adverse Drug Event (ADE) occurs also can be used to investigate why a plane crashes, why packages are late for delivery services and why customer complaints occur. The cause-and-effect relationships that lead to these outcomes are what analysis can identify.

How does the chain begin?

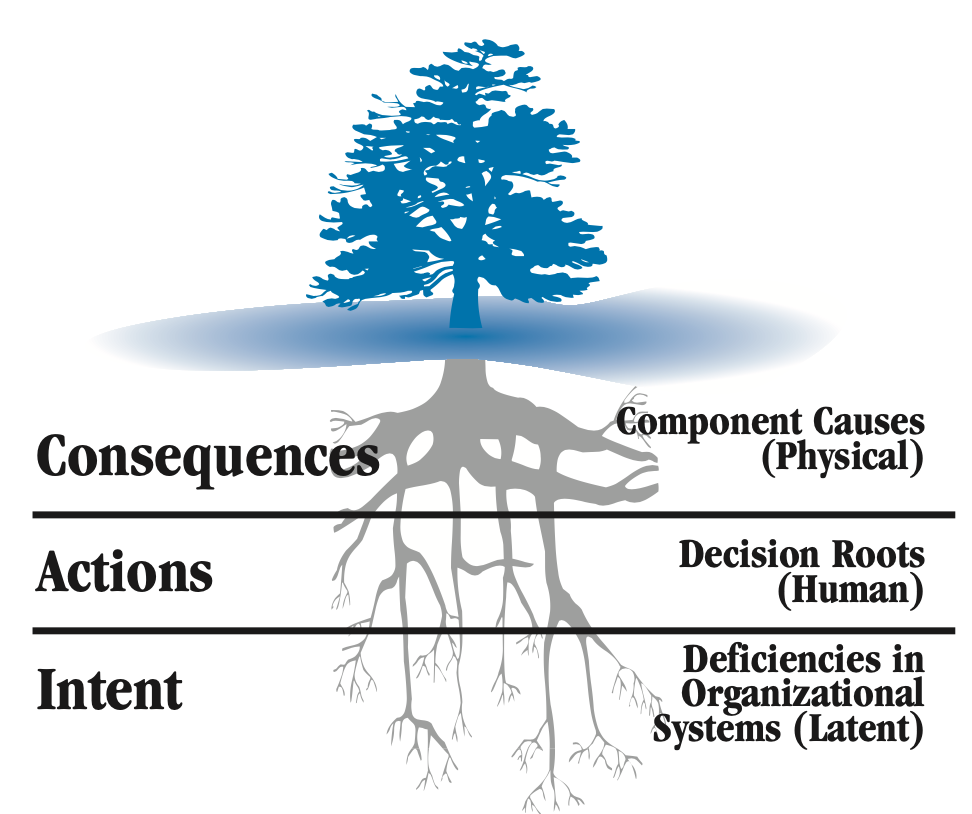

FIGURE 1: The three levels of cause

The logic tree root system depicts the origin of failure.

Adverse outcomes can often be the result of human errors of omission or commission. Either someone did something he or she wasn't supposed to do (e.g., dispensed the wrong dose of medication), or he or she was supposed to do something and didn't do it (e.g., delayed treatment in the ED). Errors at this level are referred to as “human roots” or “decision errors.” If an analysis stops at this level, it is often referred to as “witch hunting” and is indicative of a “blame” culture. Analysis used this way will not only fail but will be counterproductive, because people will choose to withhold vital information.

When people make decisions, they draw on an internal knowledge base. This internal logic system relies on information from organizational systems, experience, training and education to help make the right choice. However, when these systems are themselves flawed, we make errors: decisions based on bad information. Organizational systems include our policies, procedures, training practices and purchasing habits.

As the saying goes, “Practice makes permanent, not perfect.” This is aptly applied to many on-the-job training situations. If the person showing someone how to do a task is not doing it properly, the student has just learned how to do the task wrong consistently.

Decisions based on bad information trigger the first of a number of consequences that become physical or perceptible in nature. In other words, once a decision is made to do or not do something, it triggers some change in the environment that can be seen, heard, felt, smelled or tasted. If change sequences are not caught and stopped, the result can be an adverse outcome.

James Reason coined the term “Swiss cheese model” to capture this sequence of events.(7) He also uses the terms “intent,” “actions” and “consequences,” which correlate to latent, human and physical roots. In this model, most decisions are made with good intent. Based on that intent, a decision is made either to take action or not. The decision triggers a series of physical consequences that continue until someone catches them (stopping the chain) or they result in an undesirable outcome.

Because of this sequence, while determining the cost of the errors themselves is likely not feasible, determining the cost of their consequences is. This is how a business case can be built.
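The three levels of cause map naturally onto a tree in which each hypothesis points to the causes beneath it. The sketch below tags nodes as physical, human or latent roots (the sidebar's key uses HR and LR; PR for physical roots is an assumed label), and the example tree is hypothetical, built from the article's generic examples rather than from a real case.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Level(Enum):
    """The three levels of cause; "PR" is an assumed label, "HR" and "LR" follow the sidebar's key."""
    PHYSICAL = "PR"  # perceptible consequence: seen, heard, felt, smelled or tasted
    HUMAN = "HR"     # decision error of omission or commission
    LATENT = "LR"    # flawed organizational system behind the decision


@dataclass
class Node:
    """A hypothesis in a cause-and-effect logic tree; `causes` are the hypotheses beneath it."""
    text: str
    level: Optional[Level] = None  # None for the event itself
    causes: list["Node"] = field(default_factory=list)

    def add(self, child: "Node") -> "Node":
        """Attach a cause and return it so deeper causes can be chained on."""
        self.causes.append(child)
        return child


def collect(node: Node, level: Level) -> list[str]:
    """Depth-first walk returning the text of every node tagged with `level`."""
    found = [node.text] if node.level is level else []
    for child in node.causes:
        found.extend(collect(child, level))
    return found


# Hypothetical tree: generic examples, not findings from a real analysis.
event = Node("Adverse drug event")
physical = event.add(Node("Patient received incorrect dose", Level.PHYSICAL))
human = physical.add(Node("Wrong dose of medication dispensed", Level.HUMAN))
human.add(Node("Procedure does not require a second check", Level.LATENT))
human.add(Node("On-the-job training taught the task incorrectly", Level.LATENT))

print(collect(event, Level.HUMAN))   # decision errors only
print(collect(event, Level.LATENT))  # organizational system flaws
```

An analysis that stops at the human level returns only the decision error; walking down to the latent level surfaces the organizational system flaws that, per the article, must be corrected to prevent recurrence.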

Converting analysis results to financial performance

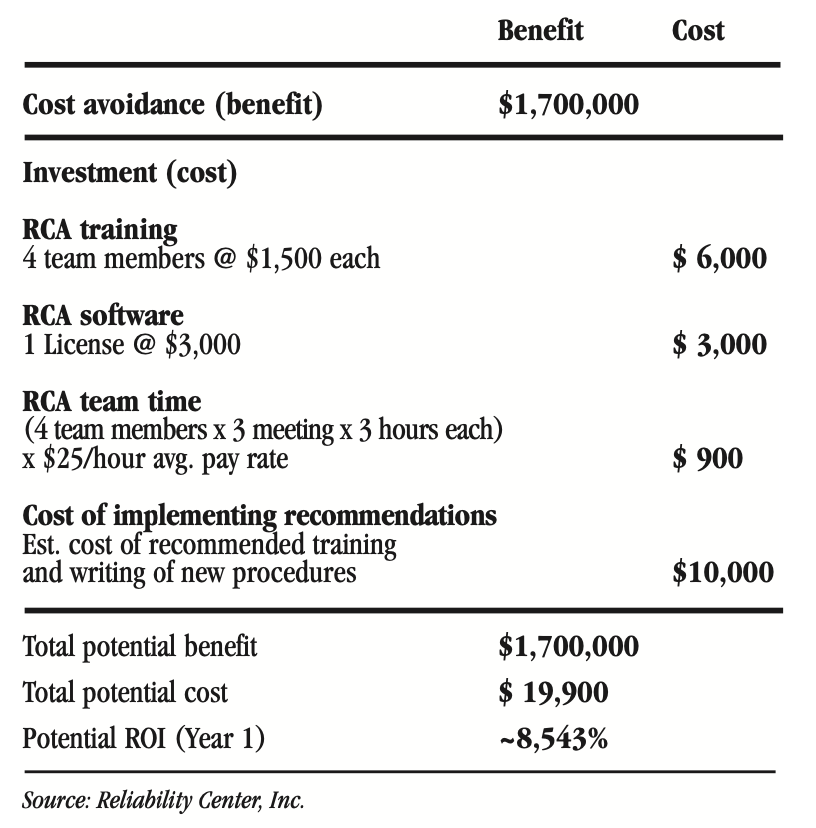

To illustrate, let’s say a reviewable sentinel event occurred in which a patient died from an allergic reaction to medication. The RCA confirms that, due to corporate mandates to cut the operating budgets by 10 percent, a pharmacist decided to curb the scope of the formulary by cutting back on the classifications of Vancomycin. (Obviously, there would be other root causes identified, as well.)

In this case, the family files a claim and is awarded $1.7 million. How can a business case be made to show that the RCA was worth it? The cost-avoidance value of $1.7 million is obvious: all else being equal, if nothing is done, another like incident can be expected.

FIGURE 2: RCA cost-benefit scenario

This scenario leads to a question: Is the organization willing to spend about $20,000 to correct a flawed system that will eventually cause one or more like occurrences in the future, which could result in additional claims averaging $1.7 million?

Many will argue that because such “potential events” did not occur, claiming them as savings is not valid. These critics must realize that decision errors are the result of flawed organizational systems. If the system is not corrected, patients are no safer and remain at risk of recurrence. Simply reprimanding a person who makes a poor decision, without understanding the basis of that decision, will not make the real root causes (system flaws) go away. Organizational systems are not typically put in place for a single person to use; many people use them. Therefore, the risk of recurrence somewhere else in the organization is still high.
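The arithmetic behind the scenario can be made explicit. The Python sketch below uses the example's figures ($20,000 to correct the system, claims averaging $1.7 million) and computes the net benefit and return on investment if the correction prevents one like event. Treating avoided events as an expected, possibly fractional, count is an assumption added for illustration, and the other benefits listed in the conclusion are not included.

```python
def rca_business_case(correction_cost: float, avg_claim: float, avoided_events: float) -> dict[str, float]:
    """Compare the one-time cost of correcting a flawed system with the claims it avoids.

    avoided_events may be fractional to express an expected number of recurrences
    (an assumption layered on top of the article's scenario).
    """
    avoided_cost = avg_claim * avoided_events
    net_benefit = avoided_cost - correction_cost
    return {
        "avoided_cost": avoided_cost,
        "net_benefit": net_benefit,
        "roi": net_benefit / correction_cost,
        # expected number of avoided like events needed for the fix to pay for itself
        "break_even_events": correction_cost / avg_claim,
    }


case = rca_business_case(correction_cost=20_000, avg_claim=1_700_000, avoided_events=1)
print(f"Net benefit: ${case['net_benefit']:,.0f}")             # Net benefit: $1,680,000
print(f"ROI: {case['roi']:.0%}")                                # ROI: 8400%
print(f"Break-even: {case['break_even_events']:.3f} events")    # Break-even: 0.012 events
```

The break-even figure means that, on claims alone, the correction pays for itself if it is expected to prevent as little as about 0.012 of a like event, roughly a 1.2 percent chance of one recurrence.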

CONCLUSION

When examining the use of FMEA and RCA in health care, it should be understood that patient safety and the bottom line are directly proportional. As patient safety increases, adverse outcomes decrease. As adverse outcomes decrease, the following positive results may be seen:

- Claims decrease

- Insurance premiums decrease

- Work-hours are freed up for productive/proactive tasks easing the short-staffing burden as the reactive work diminishes

- Lengths of stay are decreased

- Legal fees are decreased

- Use of additional supplies and materials decreases

- Morale is increased and stress decreased

- Reputation is improved in the community

An organization will realize that progress is being made when it starts doing such analyses on its own instead of only when regulatory agencies require them. When an organization gains control of its operations, there will tend to be fewer sporadic events, such as reviewable sentinel events. As this trend progresses, time that analysts would have spent attending to such high-visibility events can instead be focused on more chronic events (precursors to sporadic ones) that ordinarily would not get such attention. From an organizational development standpoint, the shift toward proaction will be matched by a corresponding decline in reaction.

In short, organizations will do FMEAs and RCAs because they want to do them instead of because they have to do them.

RCA/FMEA Healthcare Example

EVENT FOLLOWING PATIENT TRANSFER PROMPTS USE OF RCA SYSTEM

A 71-year-old patient was transferred for post-embolic stroke rehabilitation from an acute care teaching hospital to a second acute care hospital in the same health care system. Six hours after admission to the second facility, the patient suffered an unexpected brain hemorrhage.

Later, a team was chartered to identify the root causes of this event, including any management systems deficiencies. Appropriate recommendations addressing identified root causes would be communicated to management for rapid approval and implementation.

The root cause analysis (RCA) team included the risk coordinator (team leader), director of risk management/quality assurance, quality coordinator, director of pharmacy, chief of medicine, nurse manager-rehabilitation and chief of rehabilitation.

METHODOLOGY

To expedite the process, the accepting transfer hospital decided to use RCA software. It selected Reliability Center, Inc.’s PROACT® software, which is named for its methodology: PReserve Incident Data, Order the Analysis Team and Its Members, Analyze the Incident Data with the Team, Communicate Findings and Recommendations, Track for Bottom-Line Improvement.

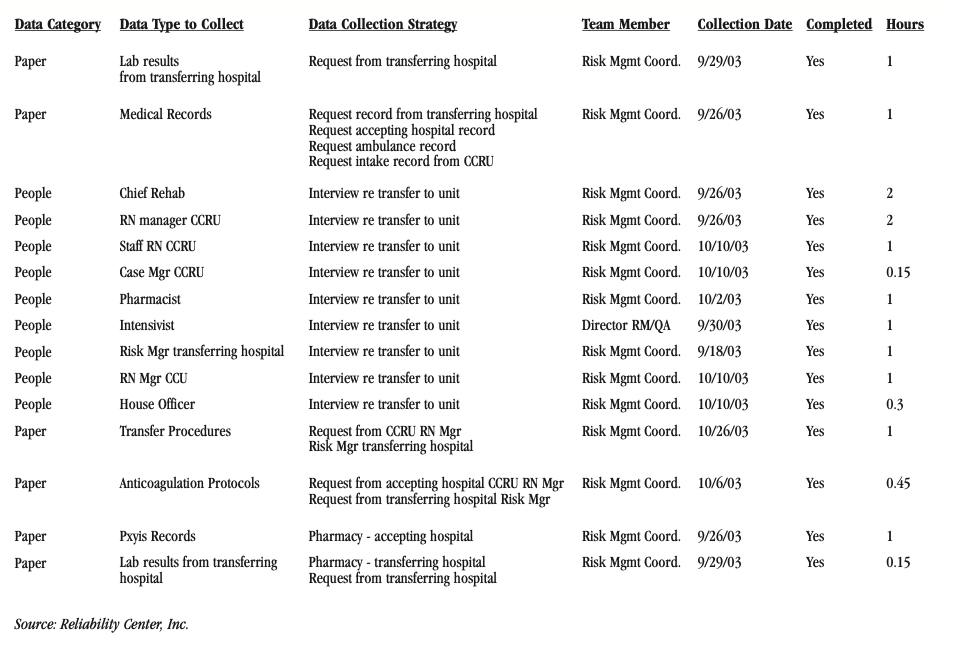

Data collection efforts involved developing strategies to collect some of the following information related to the incident:

FIGURE 1: Sample of data collection tasks assigned

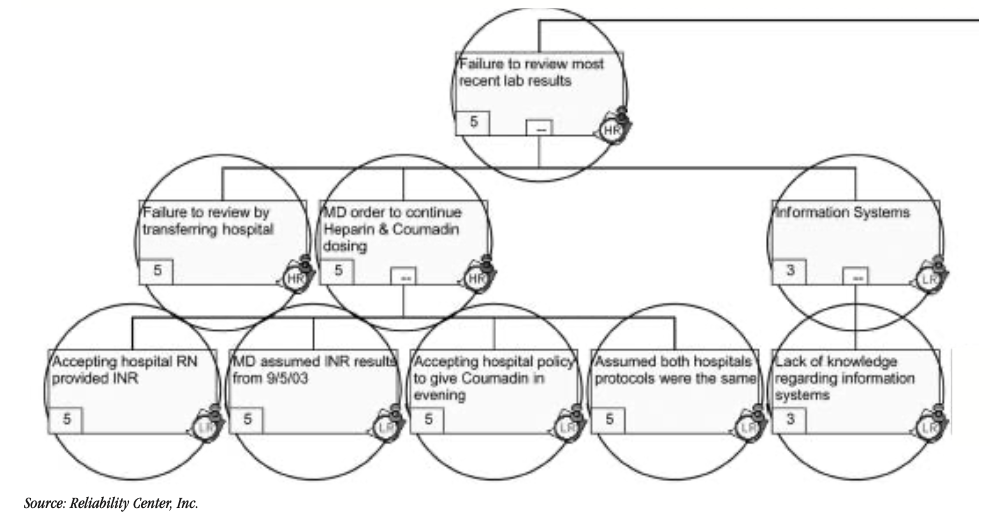

After the team members collected data, they put the pieces of the puzzle together using the facts (data collected). At this point, the team used the logic tree tool excerpted in Figure 2 to depict the cause-and-effect relationships that led up to the undesirable outcome.

FIGURE 2: Brain hemorrhage logic tree (excerpt)

Key: HR = Human decision roots; LR = Latent/organization roots

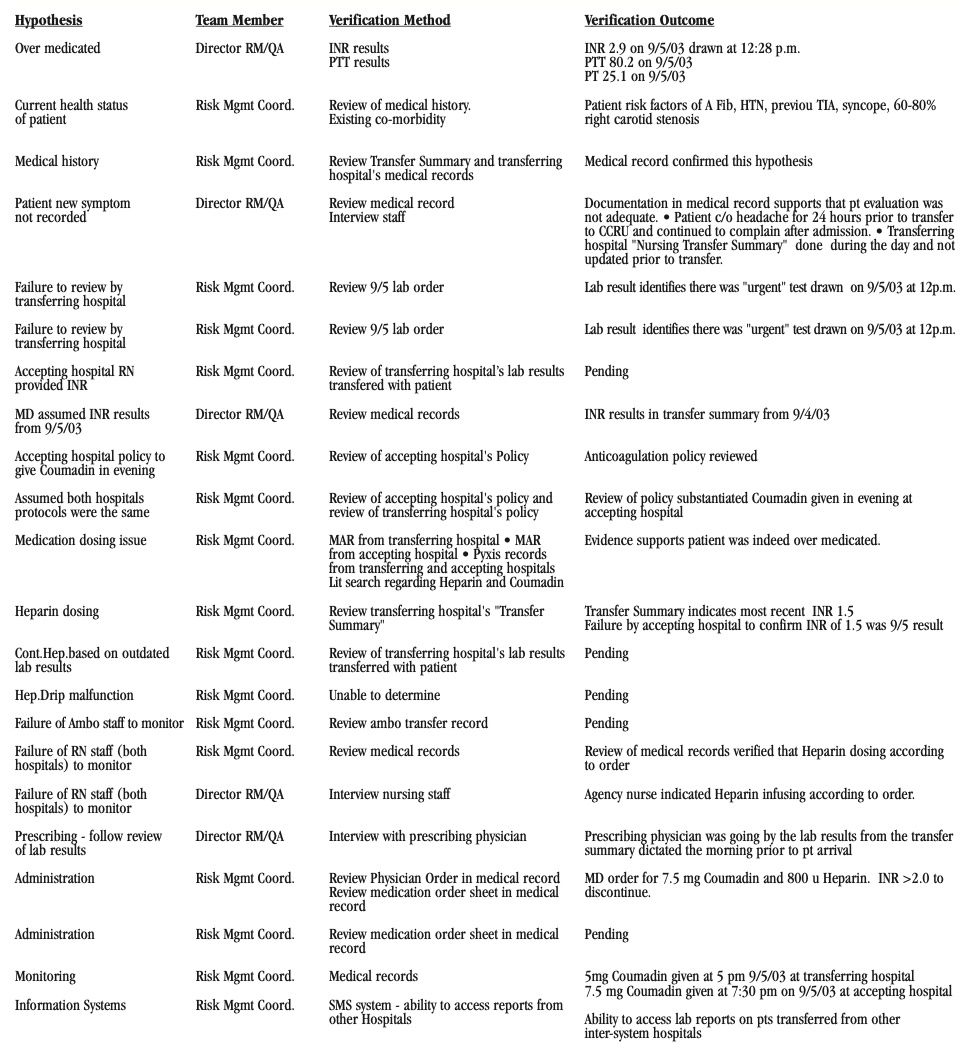

As part of the RCA methodology, each hypothesis was tested against the evidence or data collected. False hypotheses received an X and a rating of 0, indicating that the evidence showed them to be definitely untrue. Hypotheses that proved to be true became facts and were rated 5, indicating that the evidence showed them to be definitely true. A rating between 0 and 5 indicated uncertainty due to a lack of credible data.
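The rating scheme can be expressed as a small classification rule. The sketch assumes ratings are whole numbers from 0 to 5; the first two example hypotheses restate findings reported in this case study's conclusion, and the third is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    """A logic-tree hypothesis with the 0-5 evidence rating described in the case study."""
    text: str
    rating: int  # assumed integer scale: 0 = proven false, 5 = proven true

    def __post_init__(self) -> None:
        if not 0 <= self.rating <= 5:
            raise ValueError(f"rating must be between 0 and 5, got {self.rating}")

    @property
    def status(self) -> str:
        """Map the rating onto the outcomes used in the logic tree."""
        if self.rating == 0:
            return "false (X)"
        if self.rating == 5:
            return "fact"
        return "uncertain: more credible data needed"


log = [
    Hypothesis("Both facilities use identical Coumadin dosing schedules", 0),
    Hypothesis("Patient received a second Coumadin dose within 2.5 hours", 5),
    Hypothesis("Hypothetical: dosing record was lost in transfer", 2),
]
for h in log:
    print(f"[{h.rating}] {h.status:<38} {h.text}")
```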

Figure 3 shows the verification methods used for each hypothesis and the resulting outcomes (where completed).

FIGURE 3: Brain hemorrhage logic tree (excerpt)

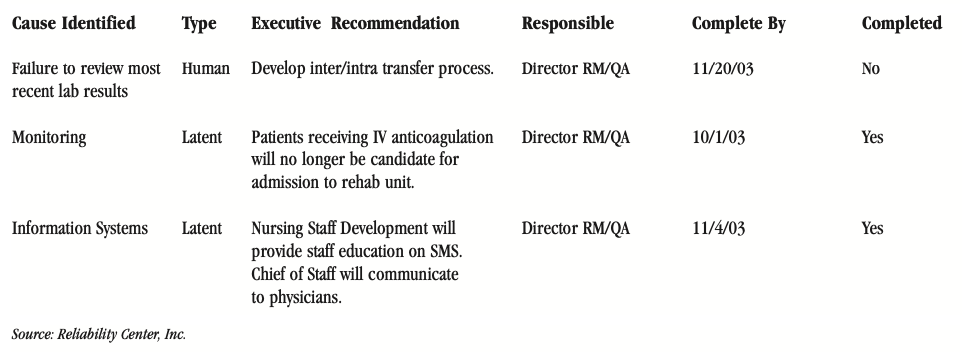

Based on the identified physical, human and latent root causes, the executive summary of recommendations shown in Figure 4 was developed. This matrix summarizes the key root causes identified, their root cause type, the recommended solutions, the estimated completion dates and whether each recommendation had been implemented as of this writing.

FIGURE 4: RCA executive summary recommendations
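Tracking a recommendation matrix like this one can be sketched as follows. The fields mirror the matrix columns described above, but the rows and dates are placeholders, not the actual Figure 4 entries, and the `open_items` report is a hypothetical helper.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Recommendation:
    """One row of a recommendation matrix (columns as described for Figure 4)."""
    root_cause: str
    root_type: str        # "PR", "HR" or "LR" (physical, human, latent)
    solution: str
    due: date             # estimated completion date
    completed: bool = False


def open_items(matrix: list[Recommendation], as_of: date) -> dict[str, list[str]]:
    """Split unfinished recommendations into overdue and still-on-schedule lists."""
    report: dict[str, list[str]] = {"overdue": [], "on_schedule": []}
    for rec in matrix:
        if rec.completed:
            continue
        key = "overdue" if rec.due < as_of else "on_schedule"
        report[key].append(rec.root_cause)
    return report


# Placeholder rows; the real Figure 4 entries are not reproduced here.
matrix = [
    Recommendation("Latent root A", "LR", "Revise procedure", date(2024, 1, 31), completed=True),
    Recommendation("Latent root B", "LR", "Update training", date(2024, 2, 29)),
    Recommendation("Human root C", "HR", "Add verification step", date(2024, 6, 30)),
]
print(open_items(matrix, as_of=date(2024, 3, 15)))
# {'overdue': ['Latent root B'], 'on_schedule': ['Human root C']}
```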

REFERENCES

1. Joint Commission on Accreditation of Healthcare Organizations (JCAHO). 2002 Hospital Accreditation Standard, LD 5.2, pp. 200-201.

2. American Society for Healthcare Risk Management. “Strategies and Tips for Maximizing Failure Mode & Effect Analysis in Your Organization.” Monograph. July 2002. www.ashrm.org (Tools & Products, Monographs)

3. Joint Commission Perspectives. January 2004, Vol. 24, No. 1.

4. Joint Commission on Accreditation of Healthcare Organizations and American Society for Healthcare Risk Management. Accreditation Issues for Risk Managers. Joint Commission Resources: Oakbrook Terrace, IL. 2004.

5. North Carolina Department of Environment and Natural Resources (DENR) Office of Organizational Excellence. Accessed at http://quality.enr.state.nc.us/tools/fishbone.htm

6. iSixSigma. Accessed at http://www.isixsigma.com/dictionary/5_Whys-377.htm

7. Reason, J. Human Error: Models and Management. Cambridge Press. 1990.

CONCLUSION

Both facilities assumed that their Coumadin dosing schedules were identical. Through RCA, however, this was determined to be a false assumption. In fact, the patient, who had received a 5 mg dose of Coumadin prior to the transfer, received another 7.5 mg dose within 2½ hours. A series of miscommunications and misinterpretations of test results also contributed to this adverse outcome, as illustrated in the logic tree (excerpted in Figure 2) and the associated verification logs (excerpted in Figure 3).

About the Author

Robert (Bob) J. Latino is former CEO of Reliability Center, Inc., a company that helps teams and companies do RCAs with excellence. Bob has been facilitating RCA and FMEA analyses with his clientele around the world for over 35 years and has taught over 10,000 students in the PROACT® methodology.

Bob is co-author of numerous articles, has led seminars and workshops on FMEA, Opportunity Analysis and RCA, and is co-designer of the award-winning PROACT® Investigation Management Software solution. He has authored or co-authored six books related to RCA and reliability in both manufacturing and health care and is a frequent speaker on the topic at domestic and international trade conferences.

Bob has applied the PROACT® methodology to a diverse set of problems and industries, including a published paper in the field of Counter Terrorism entitled, “The Application of PROACT® RCA to Terrorism/Counter Terrorism Related Events.”
