Is Systems Thinking Critical to the Success of Root Cause Analysis (RCA)?

Put another way, can RCA succeed without incorporating systems thinking? I was prompted to ask this because, according to Drs. Leveson and Dekker in their 2014 paper, Get To The Root of Accidents, systems thinking is not currently used in the application of RCA.

In that paper they state:

“An often-claimed ‘fact’ is that operators or maintenance workers cause 70–90% of accidents. It is certainly true that operators are blamed for 70–90%”.

The paper gives no citations for these statistics, and those numbers certainly do not reflect my experience facilitating RCAs for the past three decades. In fact, if an ‘RCA’ concludes with a component cause or by blaming a decision-maker, it is NOT an RCA in my eyes.

In this paper, I will use the same definition of systems thinking that they use, because I absolutely agree with it:

“Systems thinking is an approach to problem solving that suggests the behavior of a system’s components only can be understood by examining the context in which that behavior occurs. Viewing operator behavior in isolation from the surrounding system prevents full understanding of why an accident occurred — and thus the opportunity to learn from it.”

Years ago, I was speaking at an HPRCT (Human Performance, Root Cause & Trending) conference where Dr. Dekker was also a speaker. I had read Dr. Dekker’s books for years and was really looking forward to seeing him present in person. To my surprise, he made it clear during his presentation that he saw little to no value in RCA, referring to it as ‘obsolete and old-school’. This was all the more concerning because the conference was built, in part, around RCA.

During his post-session Q&A with Dr. Todd Conklin, I asked them about this position because I thought I might have misinterpreted it, and Dr. Dekker confirmed that I had heard correctly. They went on to characterize ‘RCA’ as linear, component-based thinking that results in a single cause. Based on my experience, this was a total mischaracterization of true, effective RCA as it is practiced properly in the field. I asked myself what would lead such a brilliant researcher to make that statement, so I set out to figure it out (like a mini-RCA of my own).

The cited paper was written in 2014, and Dr. Dekker’s position appears to have changed since then. In that paper he states, “Yet learning as much as possible from adverse events is an important tool in the safety engineering tool kit”, but his statements at HPRCT suggest he has since changed that position.

My purpose in writing this paper is to tell my story: my perspective on what ‘RCA’ is in the world I operate in. That perspective does not match his characterization at all, but it does match the principles he expressed in the cited paper. For full disclosure, my experience is not in research or academia but in the practical application of RCA on the front lines.

Drs. Dekker and Leveson state that 70% to 90% of RCAs conclude with Operator Error. That is not my experience at all. To make sure I was not living in a bubble, I polled veteran RCA analysts and colleagues via a LinkedIn (LI) post sharing the cited article. None of them reported experience consistent with that statistic. However, all agreed that many in our industry DO stop at blaming operators and still call their analysis an ‘RCA’. This can certainly taint the perception of what RCA is among those who do not practice it for a living. I discussed this in detail in a post entitled, The Stigma of RCA: What’s In a Name?

ARE RCAs DONE ON INCIDENTS?

In my world of Reliability Engineering, RCA IS performed on the basis of systems thinking. During event reconstruction, we start with the ‘Event’. I believe that organizations do not perform RCAs on incidents per se; they perform RCAs based on consequences. This is an important distinction, because if the consequence (or potential consequence) is not severe enough, we are unlikely to allocate the proper resources to analyzing it.

Patient falls in hospitals are an example. They are very common and are among the top sources of claims filed against hospitals. If a fall results in harm, the hospital’s risk department is aware of the potential liability should a claim be filed. Under such conditions, a formal RCA is likely to be conducted.

Conversely, if a patient falls and there is no harm, an RCA becomes optional. The choice will usually be not to do one: a claim is unlikely, so it is not considered worth diverting scarce resources to the RCA. So everyone goes on their way.

The point of this story is that what determined whether we would do an RCA was the consequence, not the incident. The consequence will usually have a business-level impact. The same holds for industry. If a critical pump fails and the spare kicks in (provided there is a functioning spare), there would be little or no loss of production. Flip that story: if the pump fails and there is either no spare or the spare also fails, significant production will be lost, and an RCA will definitely be conducted because of the business consequence.
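To make that decision rule concrete, here is a minimal Python sketch of consequence-based triage as organizations commonly practice it. The `Occurrence` class, its fields, and the 4-hour `PRODUCTION_LOSS_TRIGGER_HOURS` threshold are hypothetical illustrations, not part of the PROACT methodology or any real software; each organization sets its own triggers.

```python
from dataclasses import dataclass

# Hypothetical organizational trigger; real thresholds vary by organization.
PRODUCTION_LOSS_TRIGGER_HOURS = 4.0


@dataclass
class Occurrence:
    """An incident, described only by the consequence it produced (illustrative fields)."""
    description: str
    caused_harm: bool = False            # e.g. a patient injured in a fall
    production_loss_hours: float = 0.0   # e.g. downtime after a pump failure


def formal_rca_commissioned(occ: Occurrence) -> bool:
    """Mimic common practice: the consequence, not the incident itself, decides."""
    return occ.caused_harm or occ.production_loss_hours >= PRODUCTION_LOSS_TRIGGER_HOURS


if __name__ == "__main__":
    cases = [
        Occurrence("patient fall, no harm"),
        Occurrence("patient fall with injury", caused_harm=True),
        Occurrence("pump fails, spare starts", production_loss_hours=0.0),
        Occurrence("pump fails, spare also fails", production_loss_hours=12.0),
    ]
    for occ in cases:
        print(f"{occ.description}: formal RCA = {formal_rca_commissioned(occ)}")
```

Note that an incident without a notable consequence, such as a fall without harm or a failure absorbed by a working spare, returns `False` even though whatever caused it is still in place. Later in this paper I argue that such near misses still deserve analysis.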

RCA RECONSTRUCTION: A VISUALIZATION REWIND

In another blog, entitled Do Learning Teams Make RCA Obsolete?, I discuss at length how our PROACT RCA process works, from the conceptual perspective to the applied perspective. In this paper I will cover only the conceptual side of our approach to RCA. For those interested in more extensive detail, I invite you to review these in-depth video case studies:

1. Boiler Feed Water Pump Failure RCA

RCA vs RCFA

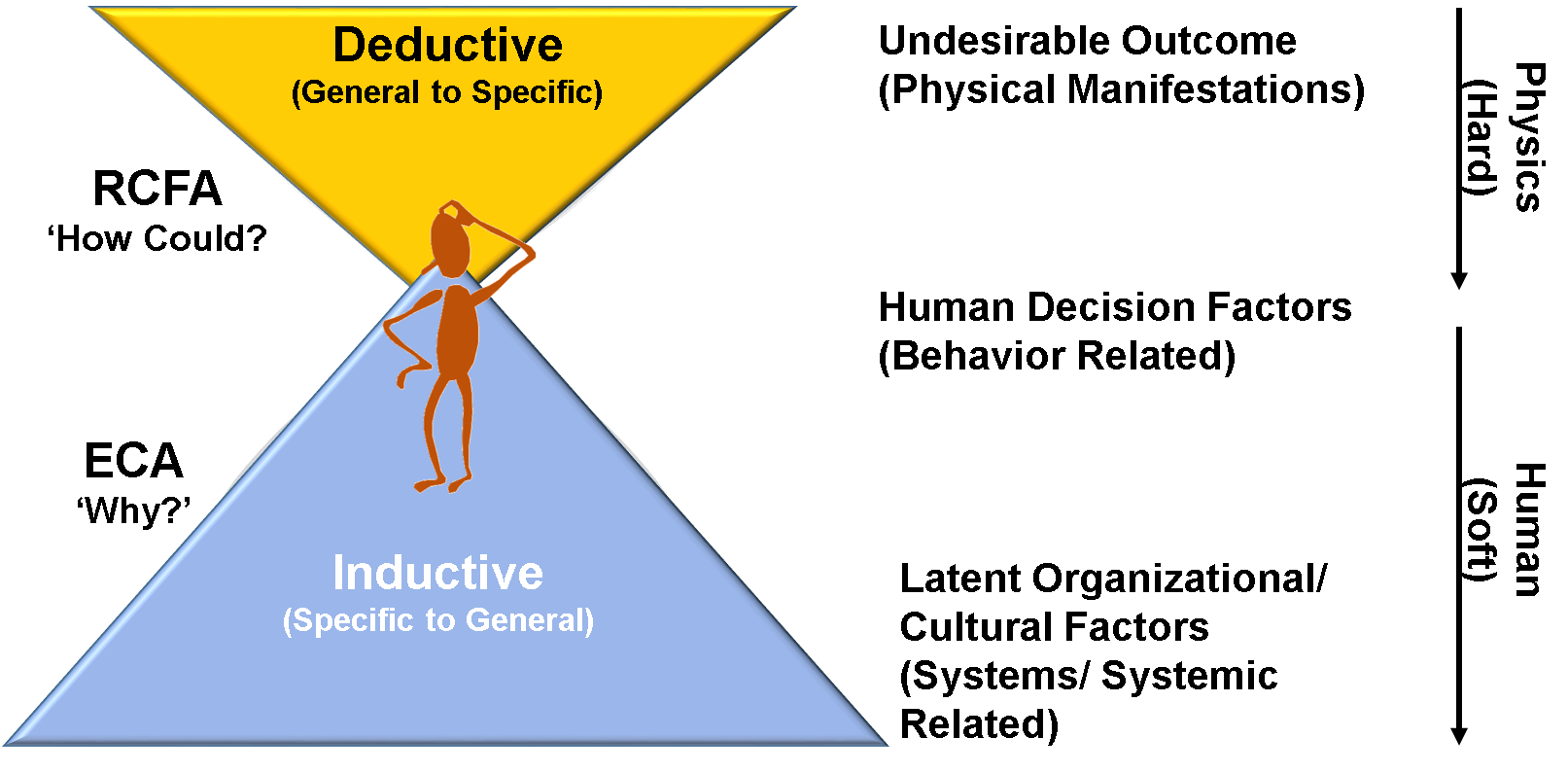

Figure 1 shows what I call our hourglass perspective. When working an Event (I use this term synonymously with Undesirable Outcome) backwards, we first have to venture through the physics of the failure: the component-based, or observable, aspects of the Event. I will admit that many who conclude an investigation with component-related causes still call it an ‘RCA’. For me, an analysis that concludes in this fashion is an RCFA, or Root Cause Failure Analysis. This typically corresponds to a metallurgical evaluation of failed components, with no attempt to go deeper. At that point the organization simply implements corrective actions based on the metallurgical report.

During this phase of the RCA, we use deductive (general-to-specific) logic, asking ‘How Could?’ the facts from the scene (the evidence collected) have come to be. Since we cannot ask equipment or other inanimate objects ‘why’ they failed, we have to explore all the possibilities.

Consider the difference between answers to ‘How Could?’ and ‘Why?’. For example, if I ask ‘How could a crime have occurred?’ versus ‘Why did a crime occur?’, are the answers different? Asking how a crime occurred seeks to understand the physics of the crime, the way CSI-type investigators view a crime scene. They are looking for the ‘how’.

Detectives and prosecutors, however, are looking for the ‘why’: the motive and the opportunity. ‘How could’ questioning lends itself to the physics of the event, while ‘why’ lends itself to the human reasoning that contributed to the event. As an FYI, in our PROACT approach we term the first observable consequence of a decision error the ‘Physical Root(s)’.

Figure 1: The Conceptual View of RCA Using the PROACT RCA Methodology

RCA vs ECA (Error Cause Analysis)

The lower half of the hourglass represents the soft side of the event: the human and systems contributions. The two triangles meet at the point where the decision is made. The decision itself (call it an inappropriate decision, a decision error, or whatever one prefers) is termed a Human Root in our RCA methodology.

However, we do not treat the decision-maker as the ‘cause’ of the event. Usually the decision-maker is the victim of flawed systems, so the hunt continues for the flaws in those systems.

In Figure 1, focus on the decision-maker in the center of the graphic: it is at this point that we switch our line of questioning to ‘Why?’ and move from deductive to inductive logic. Here we want to understand why this decision-maker felt the decision(s) they made were appropriate at the time. We are essentially looking for their reasoning: what conditions at the time made it look like the right thing to do? Usually, almost anyone else in the same position would have made the same decision, so we look for the inputs to their reasoning process that made their decision seem like the right one.

This is the point in the RCA where we leave the physical sciences behind and enter the social sciences, and where different skills are often required. It is also where we see the Human Performance Improvement (HPI) and Learning Team (LT) approaches coming into play. Because we have always incorporated this exploration of reasoning, we never considered it new. While the terms HPI and LT are relatively new, their concepts are not new to us. That said, we fully agree with their purpose and intent.

It should be no surprise that technically minded engineers shine when knee-deep in broken parts and process data. However, those left-brained critical skills are not as effective when exploring the human brain and its reasoning. I will be the first to admit that those educated in the social sciences are more adept at understanding decision reasoning than the technically minded metallurgical types.

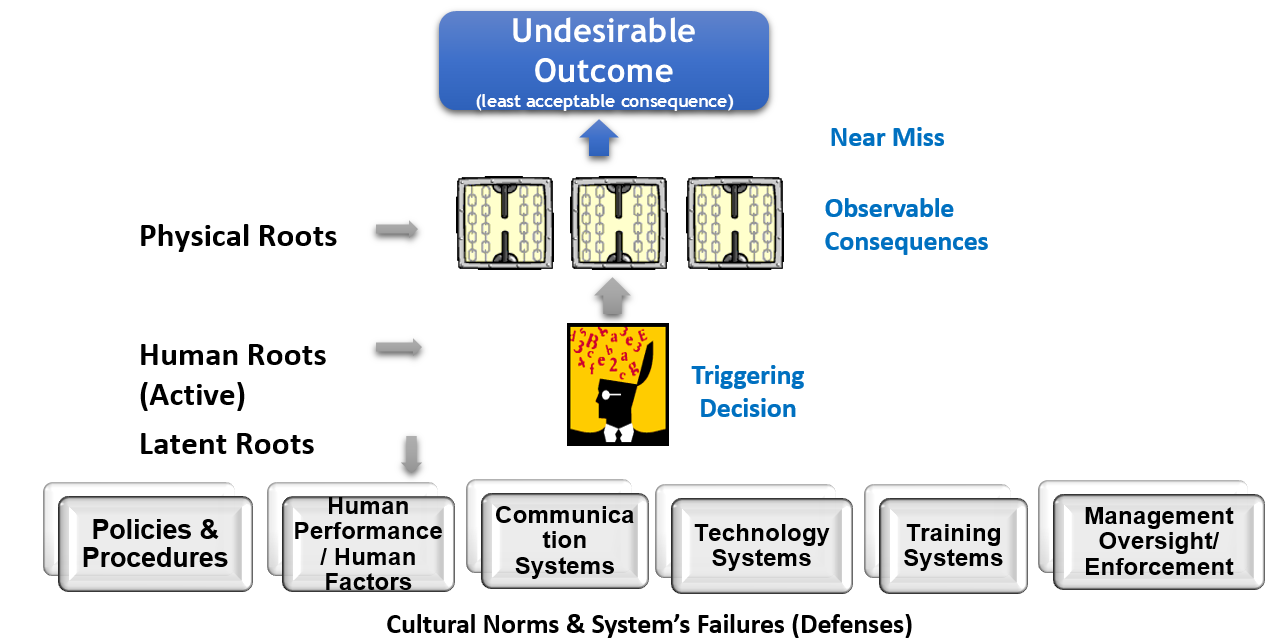

However, that does not change the need to explore decision-making and reasoning; both are critical to an effective RCA. Figure 2 depicts the germination of an undesirable outcome. Starting from the bottom, we see the foundation of our organization: the systems put in place to promote and support the best possible decision-making. A decision then triggers observable consequences in the form of cause-and-effect chains. If we do not observe and break those chains (a chain broken in time is commonly called a near miss), we will likely hit a corporate or regulatory ‘trigger’ (threshold) and suffer a notable consequence. When the chain completes, a formal RCA will normally be commissioned and the suits will show up.

The distinction between incidents and consequences that I discussed earlier is captured visually here. If I break the chain and it is deemed a near miss, we will not suffer the consequence. For example, suppose our predictive maintenance effort, say infrared imaging, detects overheated fuses in a motor control center (MCC). As a result, the MCC is shut down on a scheduled basis and the fuses are replaced. Will a formal RCA be conducted? Likely not, as there was no adverse consequence. Should an RCA be conducted to find out why the fuses overheated? Absolutely. The ‘error chain’ that led to the overheated fuses still exists. Its causes are latent, or dormant, just waiting for the next opportunity to recur.

Figure 2: Germination of an Undesirable Outcome

In Figure 2, I list examples of organizational systems. The standard ones, such as policies and procedures and training, are included, but I want to expand on the others to broaden our view of which systems impact decision-making.

Human Performance – These are attributes of the human being that could have contributed to their decision-making. For example: illness, fatigue, boredom, inadequate motivation, cognitive overload, fear of failure, poor supervision and the taking of shortcuts.

Human Factors – These are attributes of the environment in which the human works. For example, whether the workstation layout conforms to how the person actually works, and whether the equipment they use makes their job easier without harming their body (e.g., unnecessary strain on muscles or eyes).

Communication Systems – These are the means and messages by which we communicate with other people and with systems. They include simple messaging with other employees via email, text, phone, verbal discussions, body language and even hand signals. They also include how we interact with machines, such as data entry into Computerized Maintenance Management Systems (CMMS) and Electronic Medical Record (EMR) systems. Machine-to-machine communication, such as that involving Programmable Logic Controllers (PLCs), is included as well.

Technology Systems – This deals with how new technologies are introduced into the work environment and how well those who work in those areas can adapt to them. Are those who are supposed to use such technologies properly trained in them? Have our policies and procedures been updated to reflect them? Design flaws can also fall under this heading: we often introduce new technologies because existing ones are obsolete, but many may not be aware of that fact. It could also be the norm to operate beyond our design parameters to meet production demands.

Training Systems – Are our training systems keeping pace with the introduction of new technologies, new systems, new equipment and new management ideologies? Take Human Performance Improvement (HPI), for instance. Applied properly, it is a proactive tool, meant to engage people and educate them in identifying existing and potential hazards in their workplaces. While HPI skills are necessary for examining bad outcomes in hindsight, it would be great to use the same tool for foresight to prevent bad consequences.

Management Oversight – This seeks to understand how we enforce our existing systems so they are more effective. In RCAs we often see analysts conclude that someone was not qualified for the position they were in. When that occurs, we need to understand how that unqualified person was permitted to be in that job. Where was the oversight that allowed an unqualified person to be in that job, that day? We must be fair and balanced in these RCAs and let the evidence, not the biases of the facilitator or those involved, lead the analysis.

Dr. Dekker also states: “Management and systemic causal factors, for example, pressures to increase productivity, are perhaps the most important to fix in terms of preventing future accidents — but these are also the most likely to be left out of accident reports”. As my explanation shows, examining these factors is standard practice for veteran investigators, and we absolutely agree with his statement.

Without delving into decision-making and decision reasoning to uncover system flaws, we would certainly be playing a reactive game of ‘whack-a-mole’, analyzing failures as they continue to crop up and recur.

WHERE IS THE DISAGREEMENT?

In Drs. Dekker and Leveson’s cited paper, I struggle to find any disagreement between how we have been conducting RCA for the past 35 years and what they feel RCA should be. Our approach is documented in our text, Root Cause Analysis: Improving Performance for Bottom-Line Results, originally published in 1999, and in Charles Latino’s book, Strive for Excellence: The Reliability Approach, published in 1980.

Drs. Dekker and Leveson state the following:

There are three levels of analysis for an incident or accident:

• What — the events that occurred, for example, a valve failure or an explosion;

• Who and how — the conditions that spurred the events, for example, bad valve design or an operator not noticing something was out of normal bounds; and

• Why — the systemic factors that led to the who and how, for example, production pressures, cost concerns, flaws in the design process, flaws in the reporting process, and so on.

To translate that into ‘PROACT RCA speak’:

1. What = Event/Undesirable Outcome

2. How = Physical Root Causes (Physics)

3. Who = Human Root Causes (Decisions/Choices)

4. Why = Latent Root Causes (Systems)
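As an illustration of how these levels map onto a cause-and-effect logic tree, here is a minimal Python sketch that labels an analysis by how far down the hourglass it went: stopping at physical causes is an RCFA, stopping at the decision-maker is not an RCA, and only reaching latent (systems) causes counts as an RCA. The `Node` structure, the level names, and the pump causes in the example are hypothetical, invented for illustration; this is not the PROACT software. It also simplifies by looking only at the deepest level reached anywhere in the tree, whereas a stricter check would require every verified branch to reach a latent root.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Levels in the order an analysis descends the hourglass:
# What (Event) -> How (Physical Roots) -> Who (Human Roots) -> Why (Latent Roots)
LEVELS = ["event", "physical", "human", "latent"]


@dataclass
class Node:
    """One box in a cause-and-effect logic tree (hypothetical structure)."""
    level: str
    text: str
    children: list[Node] = field(default_factory=list)

    def __post_init__(self) -> None:
        if self.level not in LEVELS:
            raise ValueError(f"unknown level: {self.level!r}")


def deepest_level(node: Node) -> int:
    """Index into LEVELS of the deepest level reached at or below this node."""
    return max([LEVELS.index(node.level)] + [deepest_level(c) for c in node.children])


def classify(tree: Node) -> str:
    """Label an analysis by the deepest level it reached."""
    depth = LEVELS[deepest_level(tree)]
    if depth == "latent":
        return "RCA"  # reached the systems behind the decision
    if depth == "human":
        return "incomplete: stops at the decision-maker"
    if depth == "physical":
        return "RCFA"  # component/physics causes only
    return "event only"


if __name__ == "__main__":
    # Hypothetical causes, invented for illustration only
    physical_only = Node("event", "critical pump failure", [
        Node("physical", "bearing fatigue"),
    ])
    full = Node("event", "critical pump failure", [
        Node("physical", "bearing fatigue", [
            Node("human", "lubrication task skipped", [
                Node("latent", "PM schedule not updated after duty change"),
            ]),
        ]),
    ])
    print(classify(physical_only))  # RCFA
    print(classify(full))           # RCA
```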

The approach to RCA I have described thus far requires all of these levels to be applied in order to produce a successful and effective RCA. While we have always applied systems thinking in our PROACT RCA approach, we are acutely aware of how RCA in general is abused. I should also note that while I am conveying only my RCA experience with our PROACT methodology, my peers in this space practice it very similarly.

When RCA is viewed as a commodity, where everyone is assumed to do it the same way simply because they call it ‘RCA’, I can see how ‘RCA’ would be viewed as ineffective. If all RCA were characterized as the equivalent of the 5-Whys, that would certainly be the case.

However, RCA as described in this article is practiced by many progressive organizations around the world. As futurist Joel Barker said in his 1980s video, The Business of Paradigms, “those who say something is impossible should get out of the way of those who are doing it”.

I have the greatest respect for Drs. Dekker and Leveson and follow many of their writings. I think the paper I cited demonstrates that we are united in purpose; perhaps we are just reading from different dictionaries?

About the Author

Robert (Bob) J. Latino is the former CEO of Reliability Center, Inc., a company that helps teams and companies perform RCAs with excellence. Bob has been facilitating RCA and FMEA analyses with his clientele around the world for over 35 years and has taught over 10,000 students in the PROACT® methodology.

Bob has co-authored numerous articles, has led seminars and workshops on FMEA, Opportunity Analysis and RCA, and is co-designer of the award-winning PROACT® Investigation Management Software solution. He has authored or co-authored six (6) books related to RCA and Reliability in both manufacturing and healthcare and is a frequent speaker on the topic at domestic and international trade conferences.

Bob has applied the PROACT® methodology to a diverse set of problems and industries, including a published paper in the field of Counter Terrorism entitled, “The Application of PROACT® RCA to Terrorism/Counter Terrorism Related Events.”
