Germination of a Failure: Why Does Stuff Really Break Down? (Q&A Part 2)

I recently presented a webinar for SMRP and Empowering Pumps under the title above. Several of the post-presentation questions seemed worth expanding on in the form of a blog.

Question #2

How do you manage a situation where people just decide not to use simple tools like RCFA, just comfortable doing things same way? Even when you keep driving it…

Response: I think we can all relate to this question (and this practice). If there is no RCA policy and/or procedure in which leadership spells out what it expects, then analysts will naturally migrate to the minimal requirements (boundaries).

This often results in being ‘compliant’, which does not necessarily ensure a bottom-line benefit. I see this often where success means meeting some minimum regulatory requirement rather than yielding a measurable benefit to the organization (e.g., production increases, injury reductions, maintenance cost reductions, inventory reductions, etc.).

I don’t blame the analysts for producing poor RCAs as much as I blame those who accept them. If I perform a ‘shallow cause analysis’ (SCA) where I just meet the minimal requirements of some standard, and the powers that be accept it…then they have lowered the standard themselves.

This is often referred to as Normalization of Deviance. We are often time-pressured, so we take a shortcut in our RCA efforts. When there is no negative consequence, that shortcut becomes the new normal. This cycle repeats until we have a catastrophe, and only then, in hindsight, does the drift become clear. This drifting, or gradual decline of our standards, promotes a culture of ‘forgetting to be afraid’.

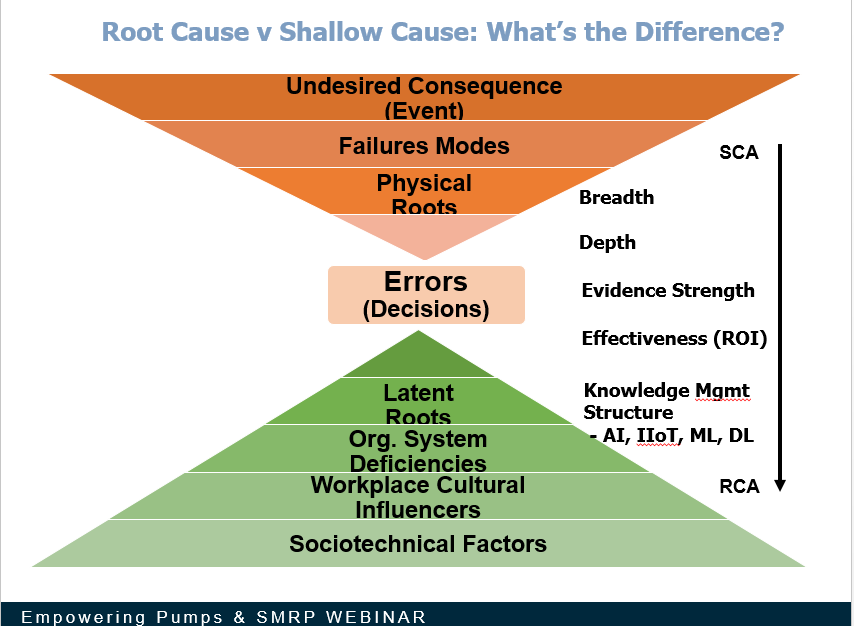

Figure 1 shows a chart I often use, which I call the ‘hourglass’ view of RCA. In the orange, deductive phase of RCA we continually ask ‘How Could?’ the previous node have occurred, and we use our evidence to prove or disprove each answer. Inevitably, we will find some type of physical root cause (the physics portion of the analysis), which is tangible.

Figure 1. Root Cause vs. Shallow Cause Analysis

If we continue to drill down with our questioning, we will eventually get to a human root cause or decision error. This will be an error of either omission or commission, but normally it is a decision made with the best of intentions. It is not important ‘who’ made the inappropriate decision; what really matters is why they thought it was the right decision at that time.

When we get to decision errors, our questions shift from deductive (‘How Could?’) to inductive (‘Why?’). I don’t want to know ‘how could’ someone have made such a decision error; I want to know ‘why’. We have to understand their reasoning, because they did not intend that outcome. They felt the decision was correct. Why did they think that?

Drilling down beneath the decision-maker will start to yield the true contributors to inappropriate decisions…our latent roots: management system deficiencies, cultural norms and socio-technical contributors. These are the inputs into the human mind that helped form the decisions made. They are much easier to discern in hindsight and much more difficult to identify proactively when looking at potential risks.

When looking at this spectrum of ‘where to stop’, we can ask ourselves these questions:

- If we stop at some physical level (which is Root Cause Failure Analysis or RCFA) and just replace a broken part or change the vendor, will the problem go away? NO

- If we stop at the decision-maker and discipline them, will the problem go away? NO

- If we address the latent root causes, the system inputs to human reasoning, are we more likely to get an appropriate decision next time? YES
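
As a rough illustration of the three questions above, here is a minimal Python sketch that records each cause in an analysis with its level (physical, human or latent) and checks how deep the evidence-backed findings actually go. The names `CauseLevel`, `Cause`, `deepest_verified_level` and `addresses_recurrence`, and the example data, are hypothetical and are not part of any RCA methodology or software mentioned here.

```python
from dataclasses import dataclass
from enum import IntEnum


class CauseLevel(IntEnum):
    """Depth of a cause in the 'hourglass'; a higher value means deeper."""
    PHYSICAL = 1  # tangible component failure (the physics)
    HUMAN = 2     # decision error (omission or commission)
    LATENT = 3    # management system, cultural, socio-technical inputs


@dataclass
class Cause:
    description: str
    level: CauseLevel
    verified: bool  # True only if evidence supports this node


def deepest_verified_level(causes):
    """Return the deepest level reached by evidence-backed causes, or None."""
    verified = [c.level for c in causes if c.verified]
    return max(verified) if verified else None


def addresses_recurrence(causes):
    """Mirror the three questions: only verified latent roots answer YES."""
    return deepest_verified_level(causes) == CauseLevel.LATENT


# Hypothetical example data, not taken from a real analysis.
analysis = [
    Cause("Bearing failed from fatigue", CauseLevel.PHYSICAL, True),
    Cause("Technician skipped alignment check", CauseLevel.HUMAN, True),
    Cause("No alignment procedure; schedule pressure rewarded speed",
          CauseLevel.LATENT, False),
]

print(deepest_verified_level(analysis).name)
print(addresses_recurrence(analysis))
```

In this made-up example the latent cause has been hypothesized but not verified, so the analysis effectively stops at the decision-maker and the check reports that recurrence has not been addressed.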

So, to answer the original question: if we permit analysts to use tools that are less than appropriate for the failure at hand, then we are part of the problem. Figure 1 shows the spectrum of analysis; if we just stop at replacing parts, that is shallow cause analysis. If we treat RCA as a comprehensive and critical system, we will not only seek the true latent causes but also collect, aggregate and leverage that learning in the form of a corporate RCA knowledge base! In the long run, such raw logic can be used in internal Artificial Intelligence (AI) and Industrial Internet of Things (IIoT) efforts.

I thank SMRP and Empowering Pumps for the opportunity to present to their participants and more importantly, I thank the participants for their time and interest.

FYI: this webinar is apparently free to SMRP members, with a $35 fee for non-SMRP members: https://portal.smrp.org/eweb/shopping/shopping.aspx?site=smrp&webcode=shopping&shopsearch=germ&prd_key=bf7268ce-edcd-4e7a-b1a2-daf53a5f8069

About the Author

Robert (Bob) J. Latino is former CEO of Reliability Center, Inc., a company that helps teams and companies do RCAs with excellence. Bob has been facilitating RCA and FMEA analyses with his clientele around the world for over 35 years and has taught over 10,000 students in the PROACT® methodology.

Bob is co-author of numerous articles, has led seminars and workshops on FMEA, Opportunity Analysis and RCA, and is co-designer of the award-winning PROACT® Investigation Management Software solution. He has authored or co-authored six books related to RCA and reliability in both manufacturing and healthcare and is a frequent speaker on the topic at domestic and international trade conferences.

Bob has applied the PROACT® methodology to a diverse set of problems and industries, including a published paper in the field of Counter Terrorism entitled, “The Application of PROACT® RCA to Terrorism/Counter Terrorism Related Events.”
